Abstract

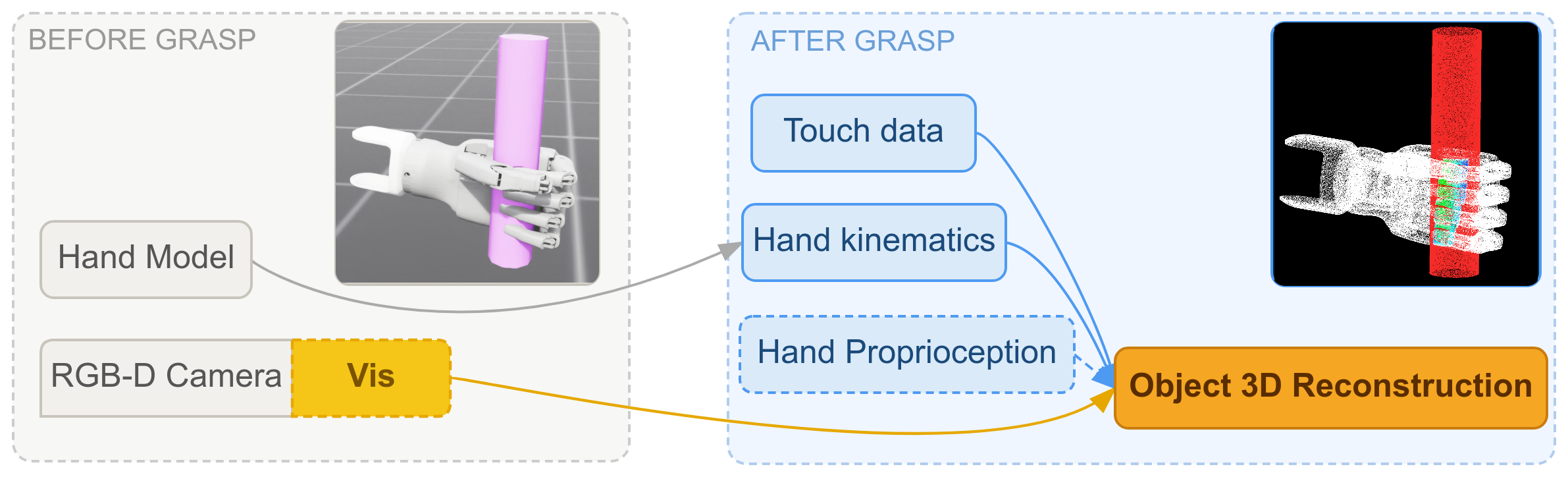

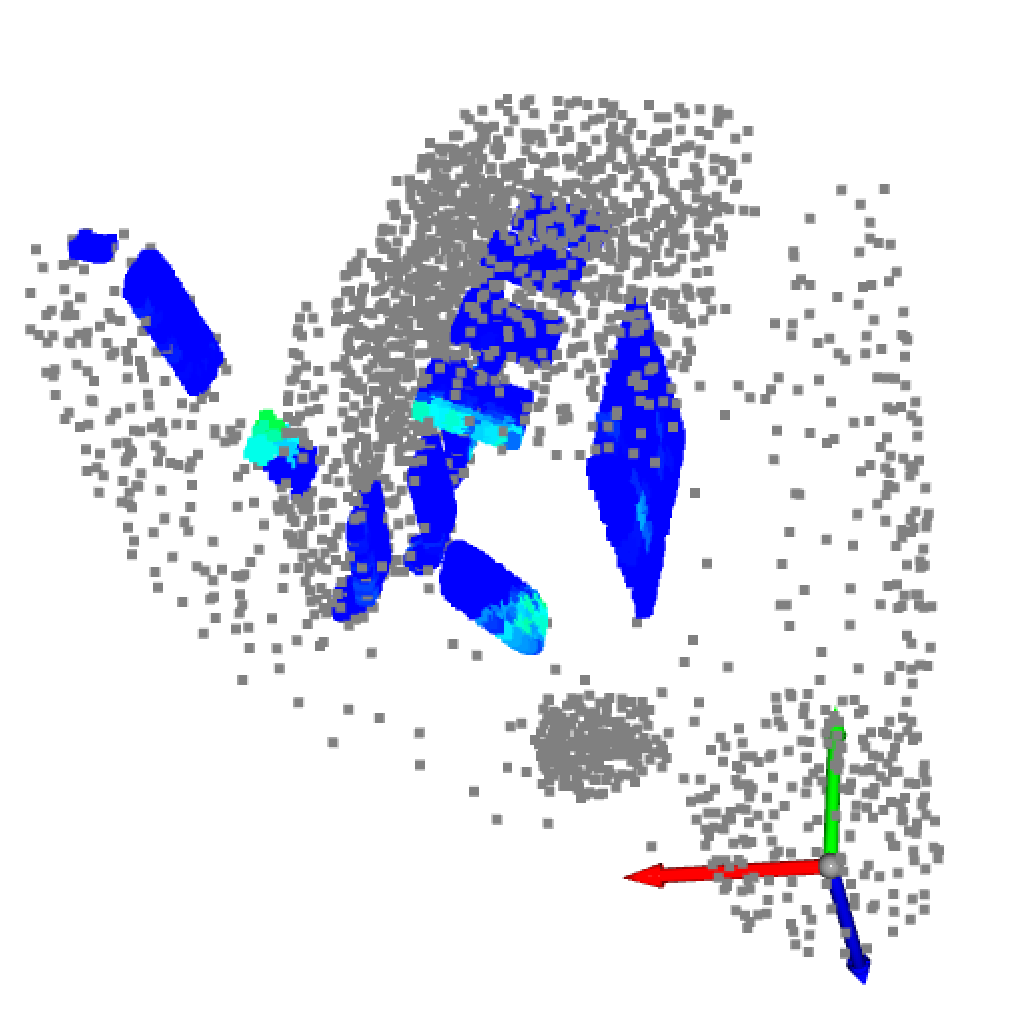

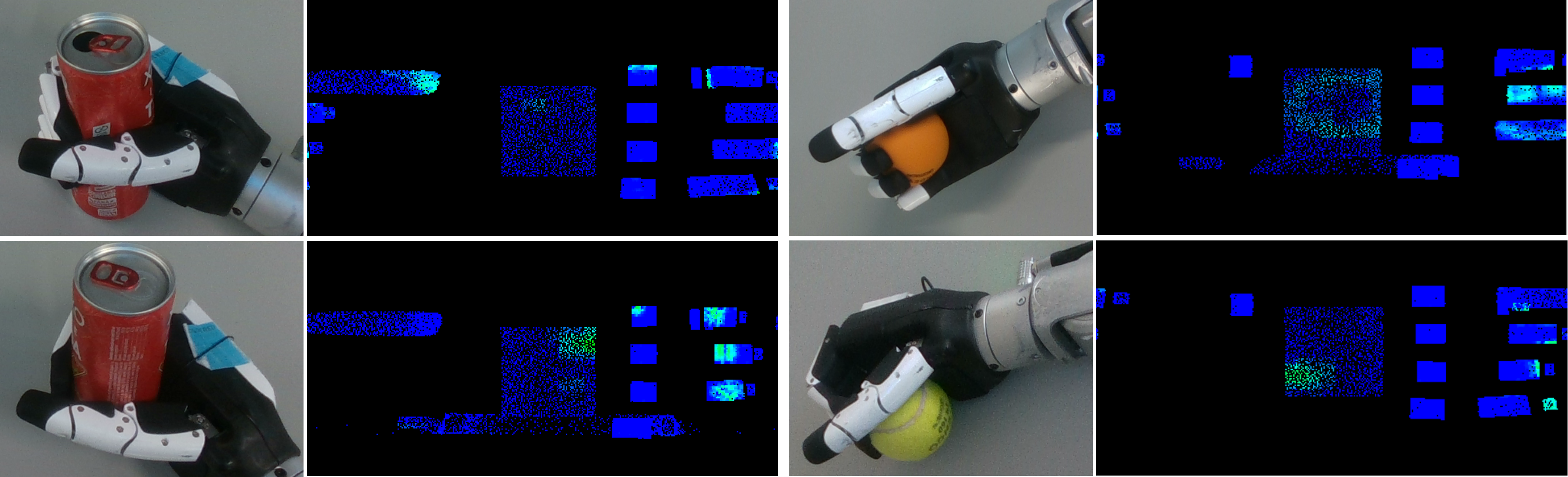

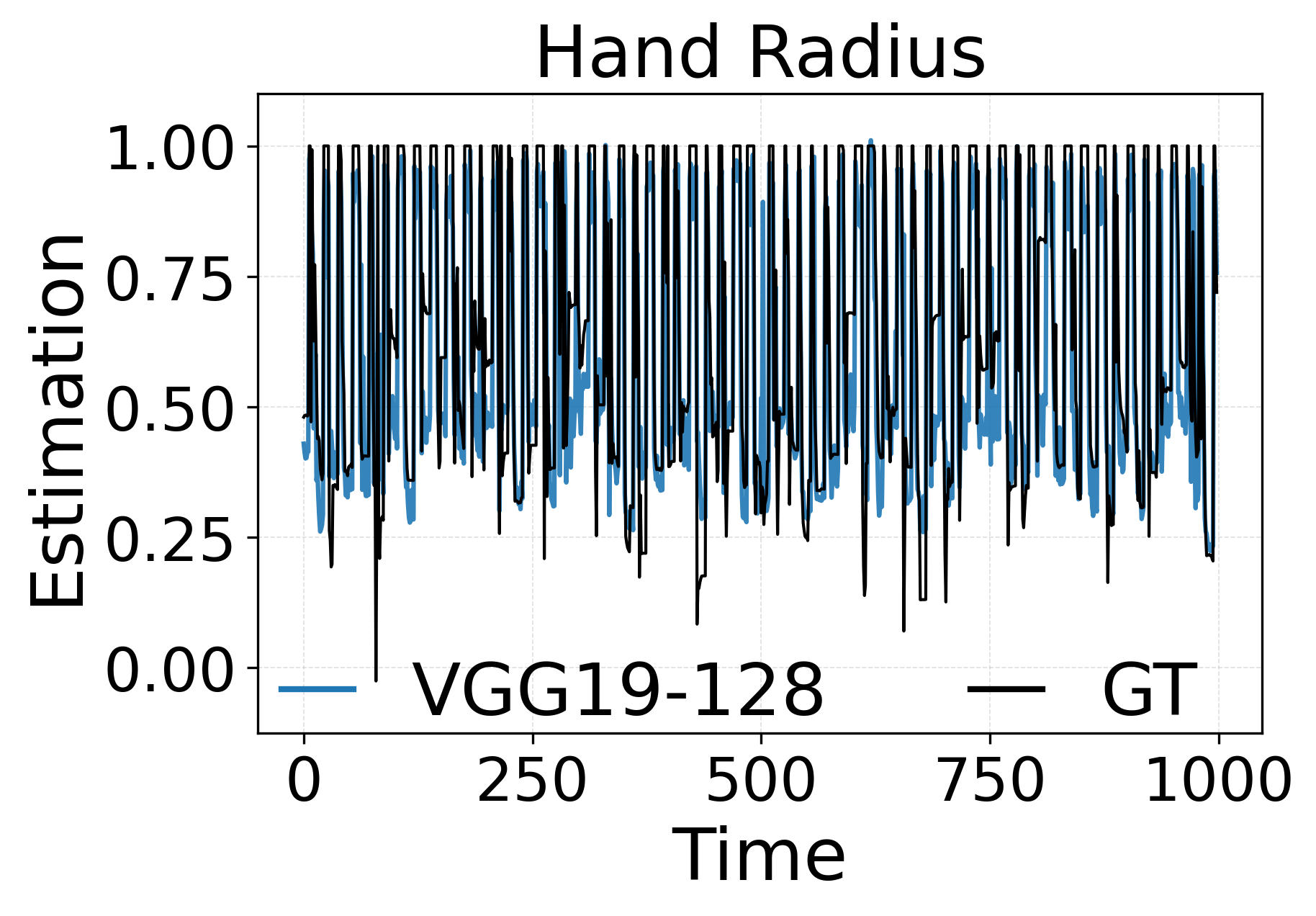

Reconstructing 3D deformable surfaces during grasping is challenging, even though tactile sensing provides high-fidelity spatial information. The complementary characteristics of visual and tactile data remain unexplored for deformable surface reconstruction. In this paper, we study how to reconstruct the surfaces of grasped objects by combining complementary visual and tactile information with 3D geometric priors.

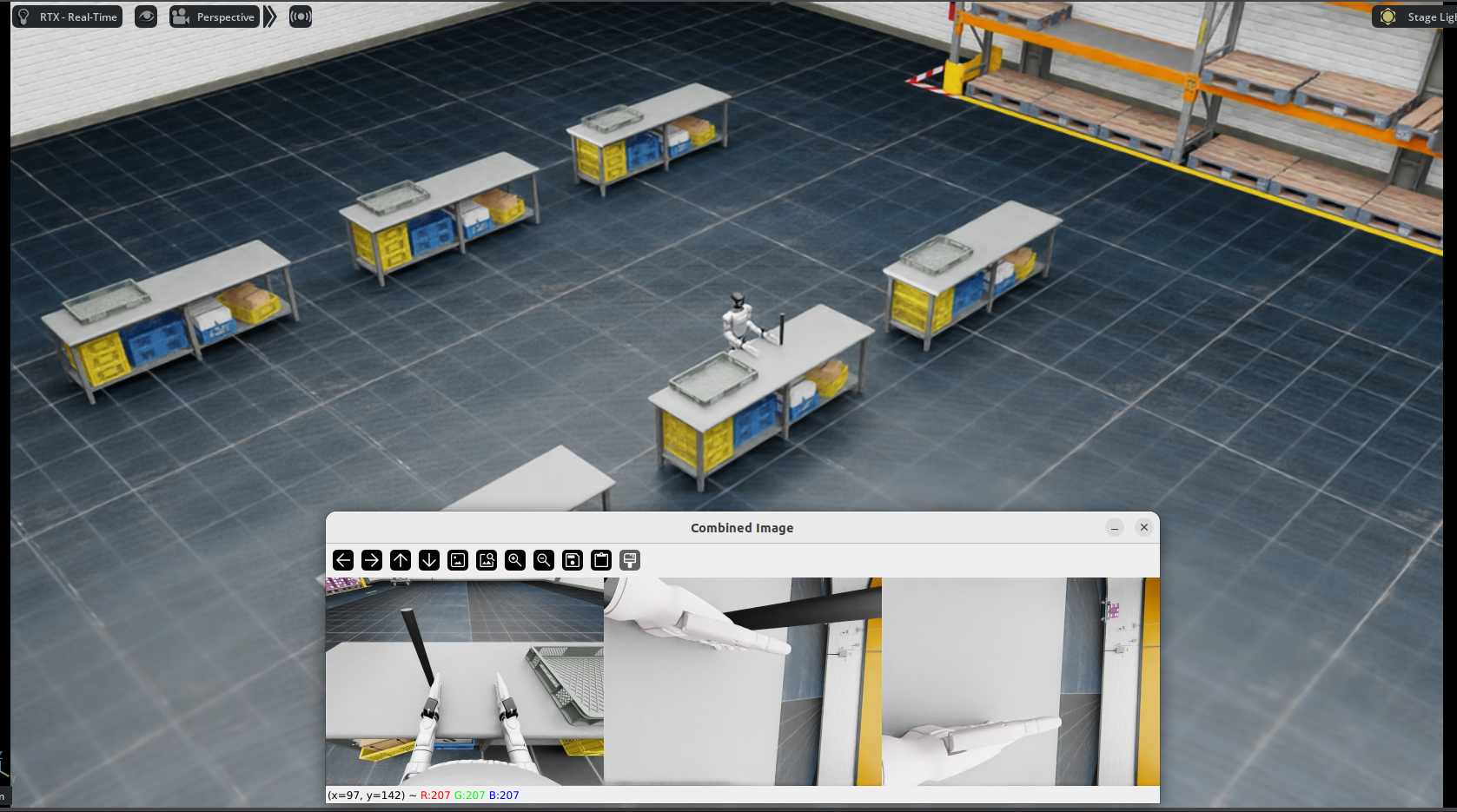

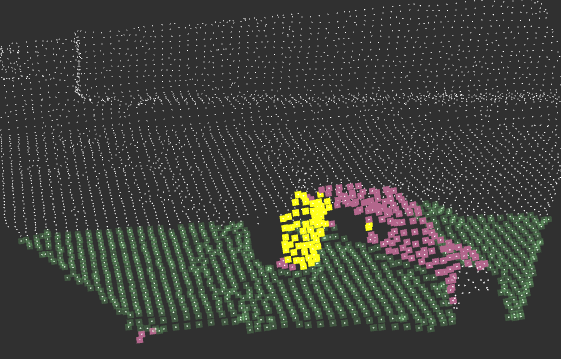

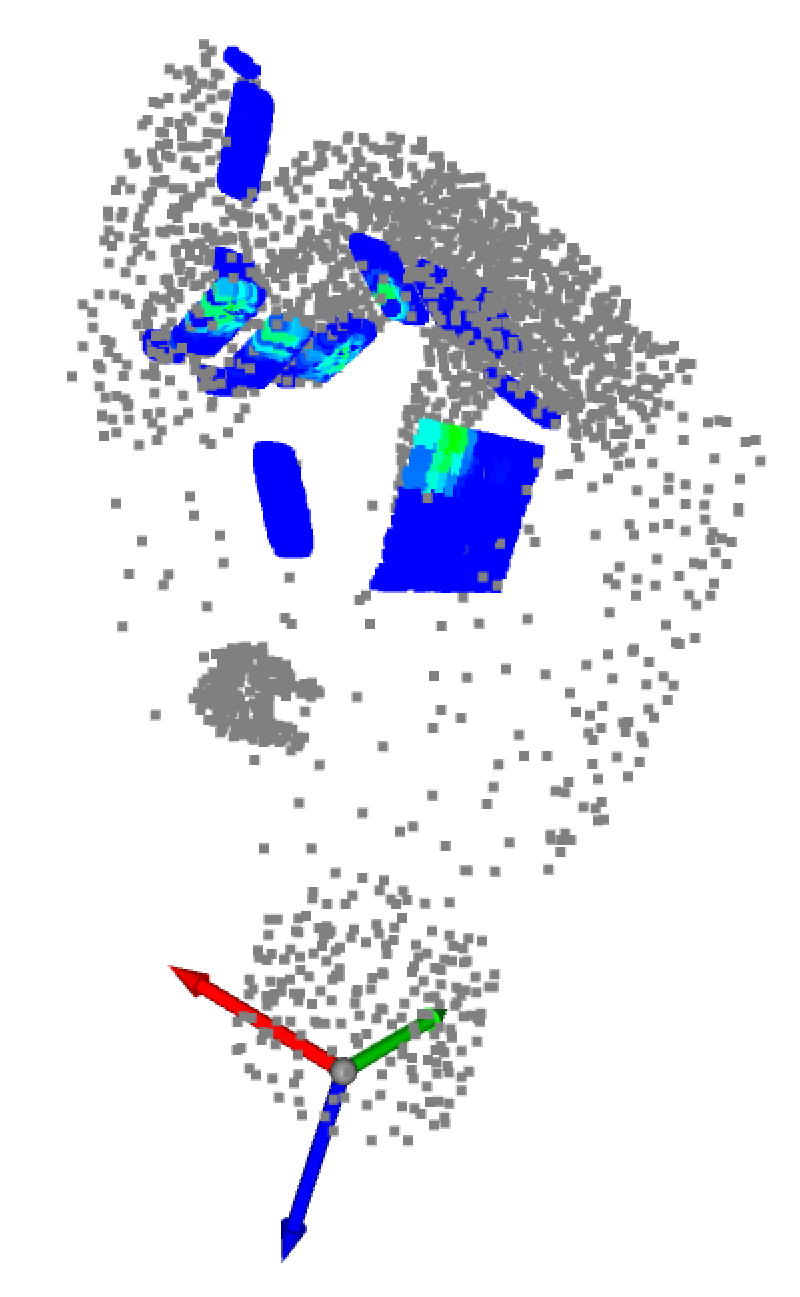

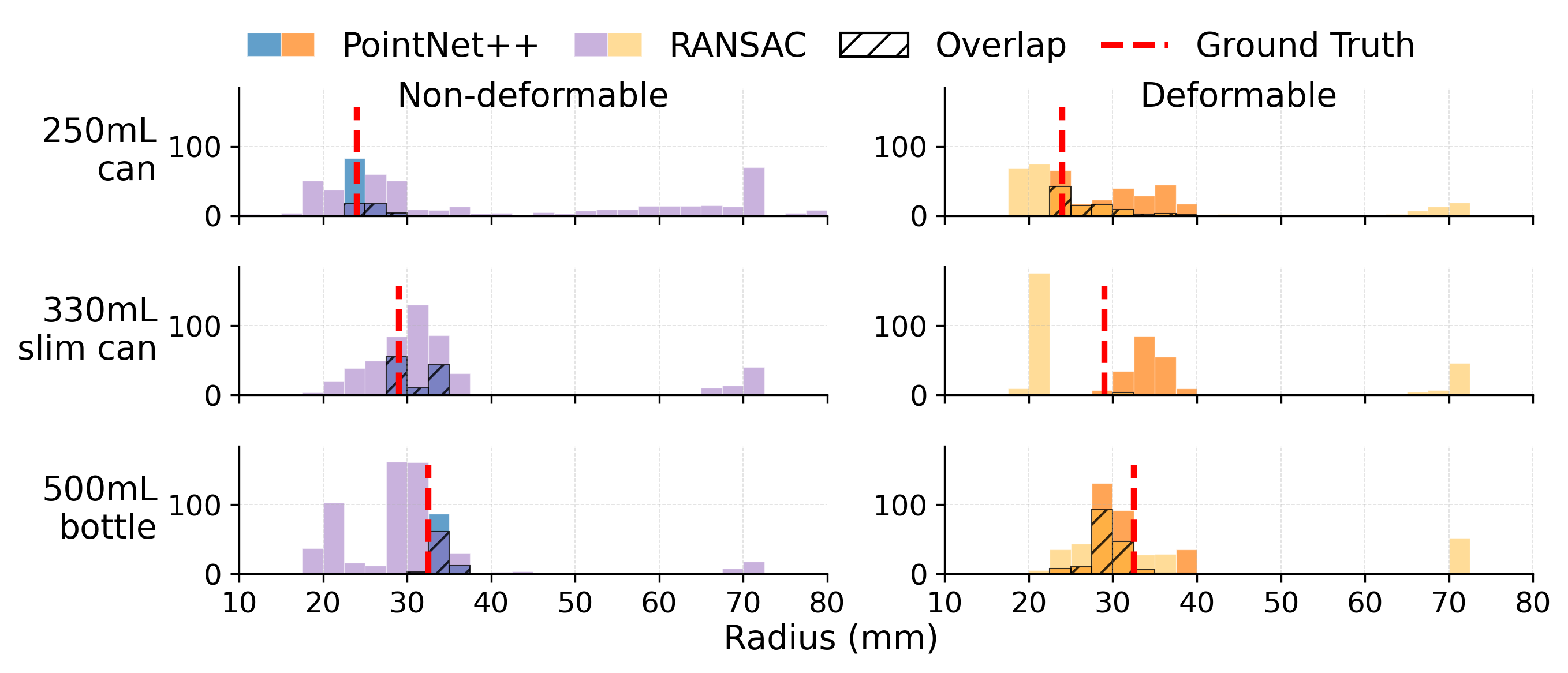

We first recover the coarse geometric shape of the object (a cylinder or a sphere) in a table-top scenario by applying a sampling approach to the depth data. Based on this geometric prior and the spatial distribution of the touch input, we then reconstruct the deformed surface by fusing the visual and tactile data in 3D. Our results show that exploiting the spatial distribution of visual and tactile input improves 3D reconstruction.
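The abstract does not specify which sampling approach is used to recover the shape prior. As one illustration of the general idea, the sketch below assumes RANSAC-style sampling: it repeatedly fits a sphere to four randomly sampled depth points and keeps the candidate with the most inliers. The function names, the inlier tolerance `tol`, the iteration count, and the synthetic scene (units assumed to be meters) are all hypothetical. Only the sphere case is shown. A cylinder would need a different minimal model, for example one that also uses surface normals.

```python
import math
import random


def _solve4(a, b):
    """Solve the 4x4 system a x = b (Gaussian elimination, partial pivoting).
    Returns None if the system is (near-)singular."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(4):
        pivot = max(range(col, 4), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            return None
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 4):
            f = m[r][col] / m[col][col]
            for c in range(col, 5):
                m[r][c] -= f * m[col][c]
    x = [0.0] * 4
    for r in range(3, -1, -1):
        x[r] = (m[r][4] - sum(m[r][c] * x[c] for c in range(r + 1, 4))) / m[r][r]
    return x


def sphere_from_4(pts):
    """Exact sphere through 4 points, using x^2+y^2+z^2 + Dx + Ey + Fz + G = 0."""
    a = [[x, y, z, 1.0] for x, y, z in pts]
    b = [-(x * x + y * y + z * z) for x, y, z in pts]
    sol = _solve4(a, b)
    if sol is None:
        return None  # coplanar (degenerate) sample
    d, e, f, g = sol
    center = (-d / 2, -e / 2, -f / 2)
    r2 = sum(c * c for c in center) - g
    if r2 <= 0:
        return None
    return center, math.sqrt(r2)


def ransac_sphere(points, iters=200, tol=0.005, seed=0):
    """Hypothetical RANSAC loop: keep the sphere with the most points within tol."""
    rng = random.Random(seed)
    best, best_inliers = None, -1
    for _ in range(iters):
        model = sphere_from_4(rng.sample(points, 4))
        if model is None:
            continue
        center, r = model
        inliers = sum(1 for p in points if abs(math.dist(p, center) - r) < tol)
        if inliers > best_inliers:
            best, best_inliers = model, inliers
    return best, best_inliers


def make_synthetic_scene(seed=1):
    """Synthetic depth points: a 4 cm-radius sphere plus points on a table plane."""
    gen = random.Random(seed)
    c, r = (0.1, 0.2, 0.5), 0.04
    pts = []
    for _ in range(300):
        v = [gen.gauss(0, 1) for _ in range(3)]
        n = math.sqrt(sum(t * t for t in v))
        pts.append(tuple(c[i] + r * v[i] / n for i in range(3)))
    for _ in range(100):  # table points, 1 cm below the sphere's lowest point
        pts.append((gen.uniform(0.0, 0.2), gen.uniform(0.1, 0.3), 0.45))
    return pts


if __name__ == "__main__":
    (center, radius), n_inliers = ransac_sphere(make_synthetic_scene())
    print(center, radius, n_inliers)
```

The table points here act as outliers. Because any four points sampled from the table are coplanar, `sphere_from_4` rejects those samples, so the loop only keeps candidates that at least partly explain the object's surface.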